Johannes Ackermann

I am on the job market for postdoc or industry positions starting Fall 2026 or Spring 2027. Please reach out if you have a position matching my interests.

I am a final-year PhD student at the University of Tokyo, working on Reinforcement Learning under the supervision of Masashi Sugiyama, and a part-time researcher at RIKEN AIP.

I previously interned at Sakana AI and at Preferred Networks, and worked on applied ML at Huawei. I obtained a B.Sc. and M.Sc. in Electrical Engineering and Information Technology from the Technical University of Munich and wrote my Master’s thesis at ETH Zurich’s Disco Group.

My main research interest is the nature of rewards in RL post-training.

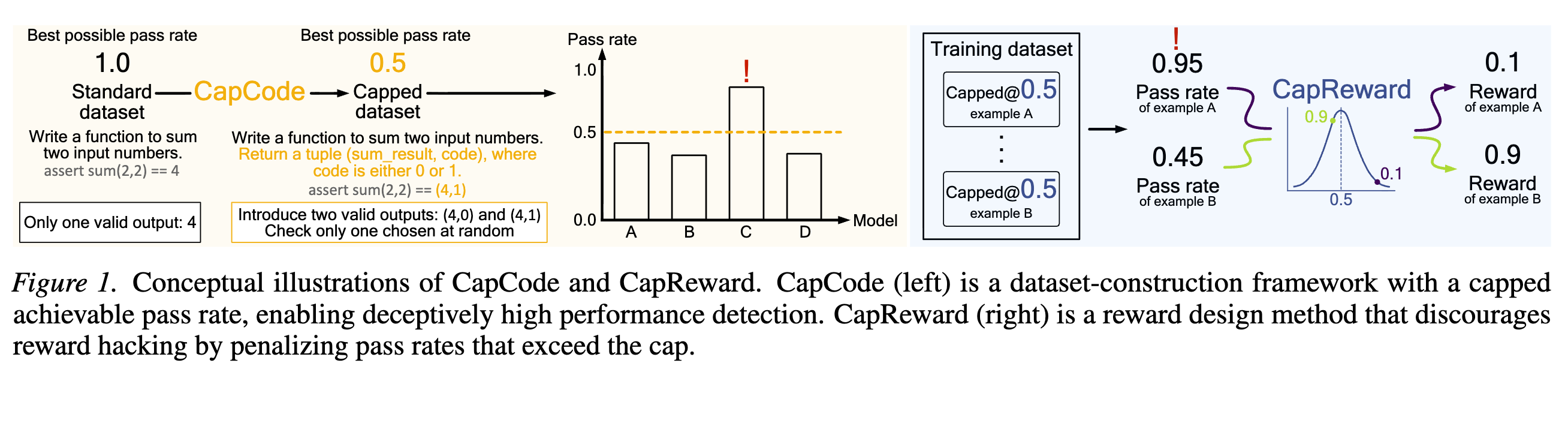

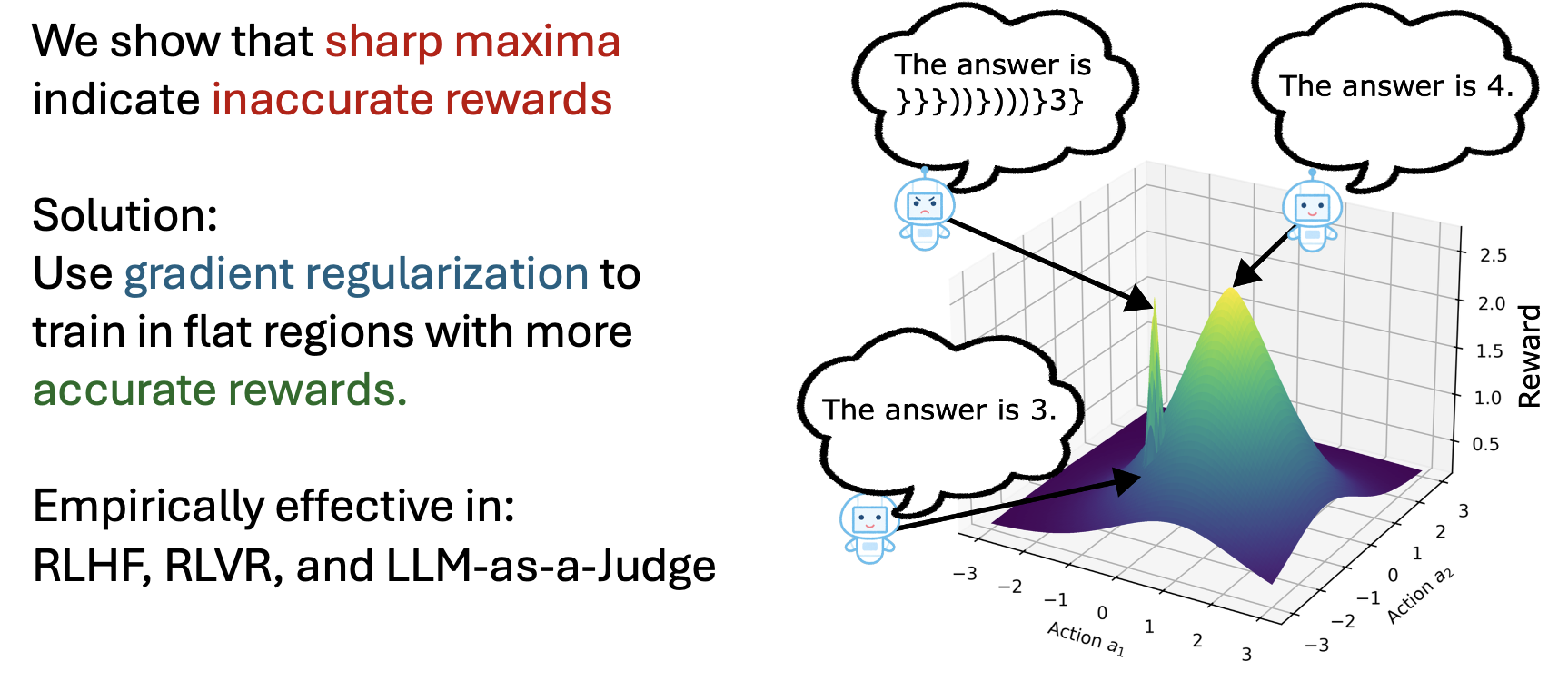

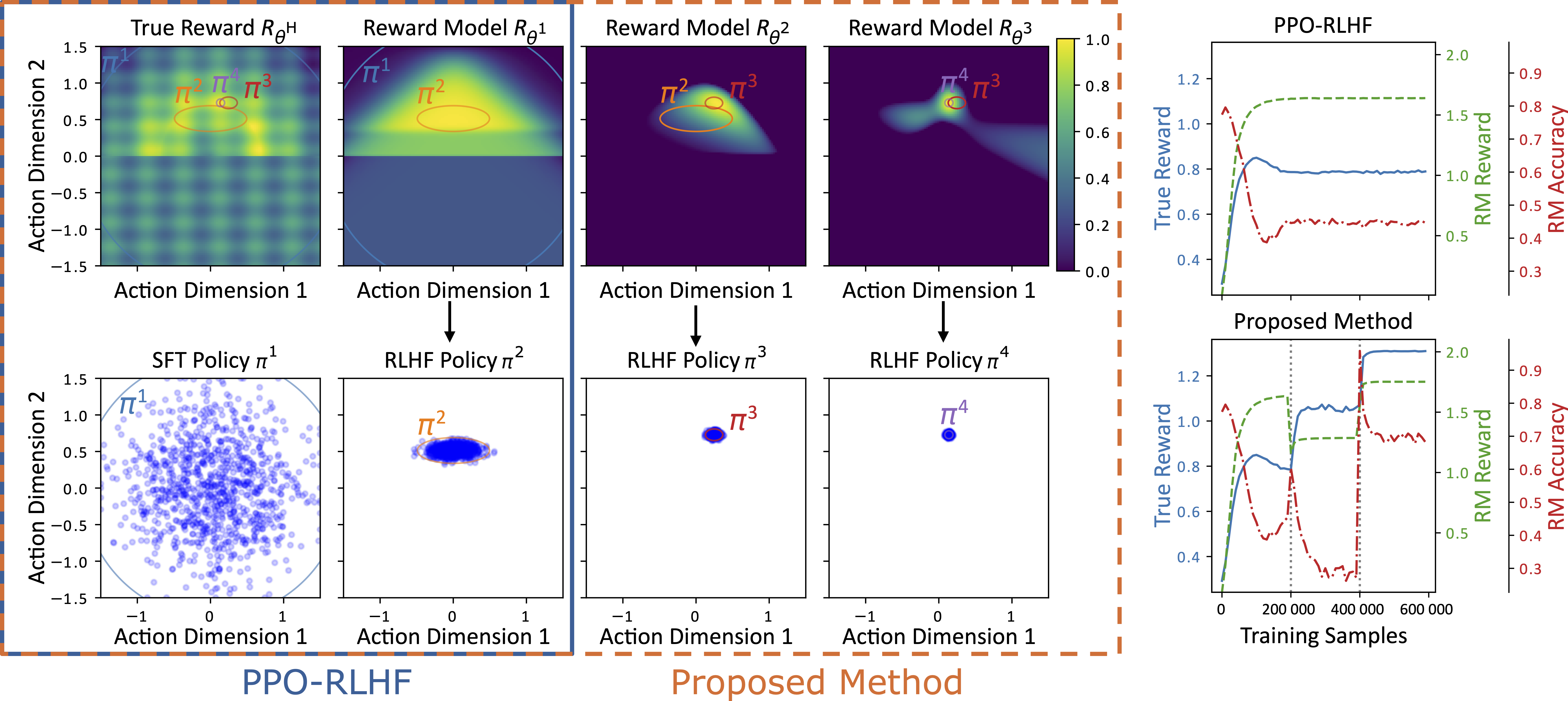

- Reward Hacking / Reward Specification in LLM post-training: Desired behaviors for LLMs are often too complex to specify via rule-based rewards, so post-training often relies on reward models or other LLMs as judges. These are inherently imperfect, and our training methods need to account for their imperfections. In our recent publication [ICML1] we reframed post-training as maximizing both reward and reward accuracy. We then showed that flatness is connected to reward accuracy, so gradient regularization can be used to preserve reward accuracy in both RLHF and RLVR tasks! We also previously showed that reward models learned from human preferences need off-policy corrections during training [COLM1]. Two forthcoming papers study reward hacking through a robust optimization lens [ICMLWorkshop1] and, specifically for coding tasks, through a Bayes error perspective [ICMLWorkshop2].

But I have also taken on a few other directions over the years:

-

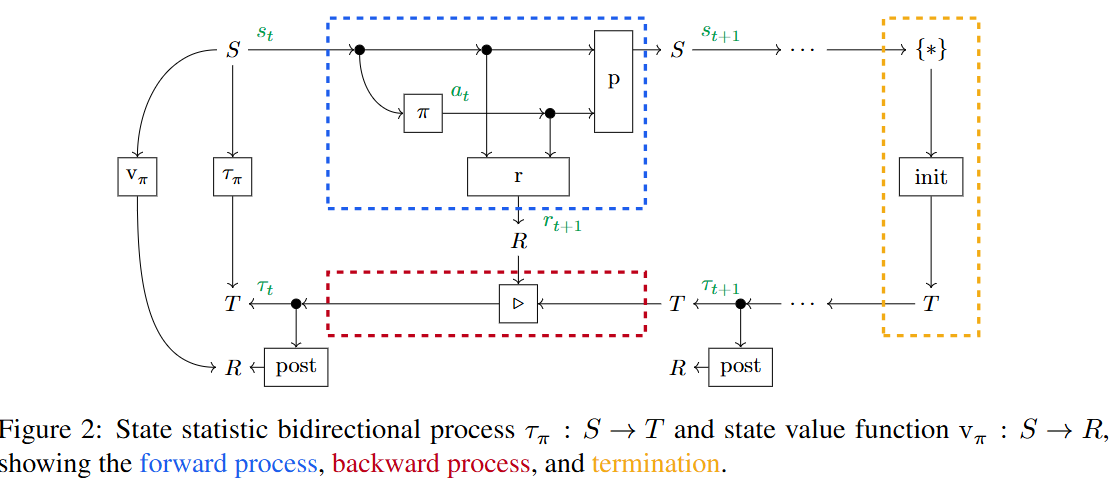

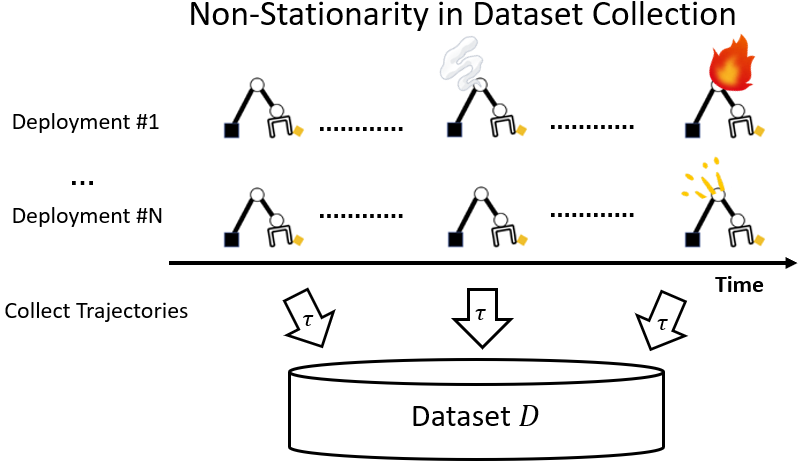

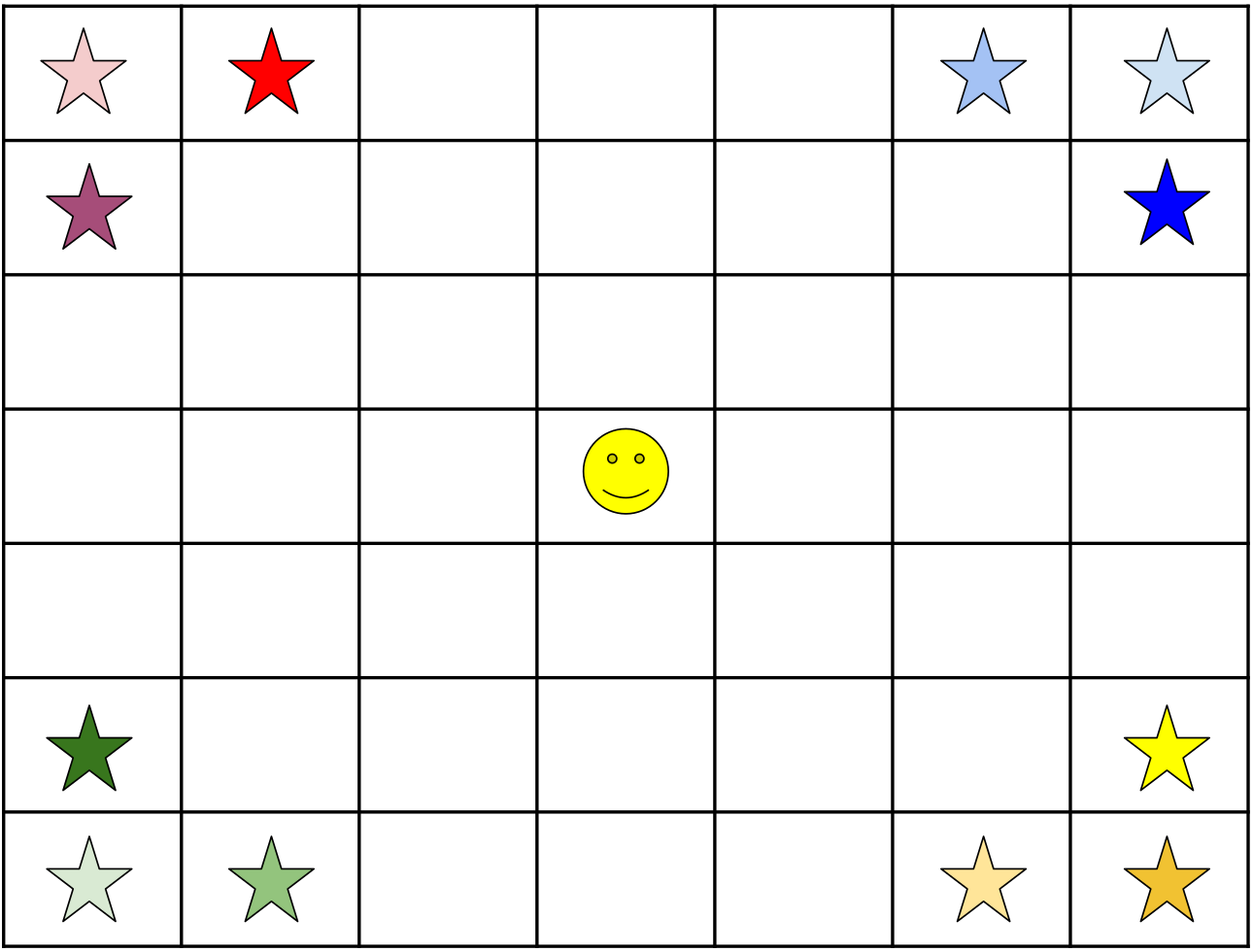

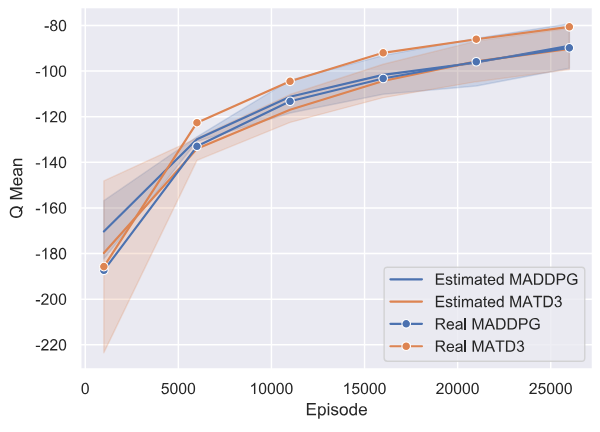

Changing or Structured Tasks: How can we deal with changing tasks during dataset collection for Offline RL [RLC1] or changing dynamics shift during deployment [RLC2]? In Multi-Task RL, all tasks are usually treated as equally (dis)similar. Can we instead identify and use task relations, by learning continuous task spaces [Thesis, Chapter 3] or task clusterings [ECML-PKDD1]? I also (co-)investigated different ways to accumulate rewards, beyond the simple discounted sum [RLC3], such as range, min, max, variance, etc.

-

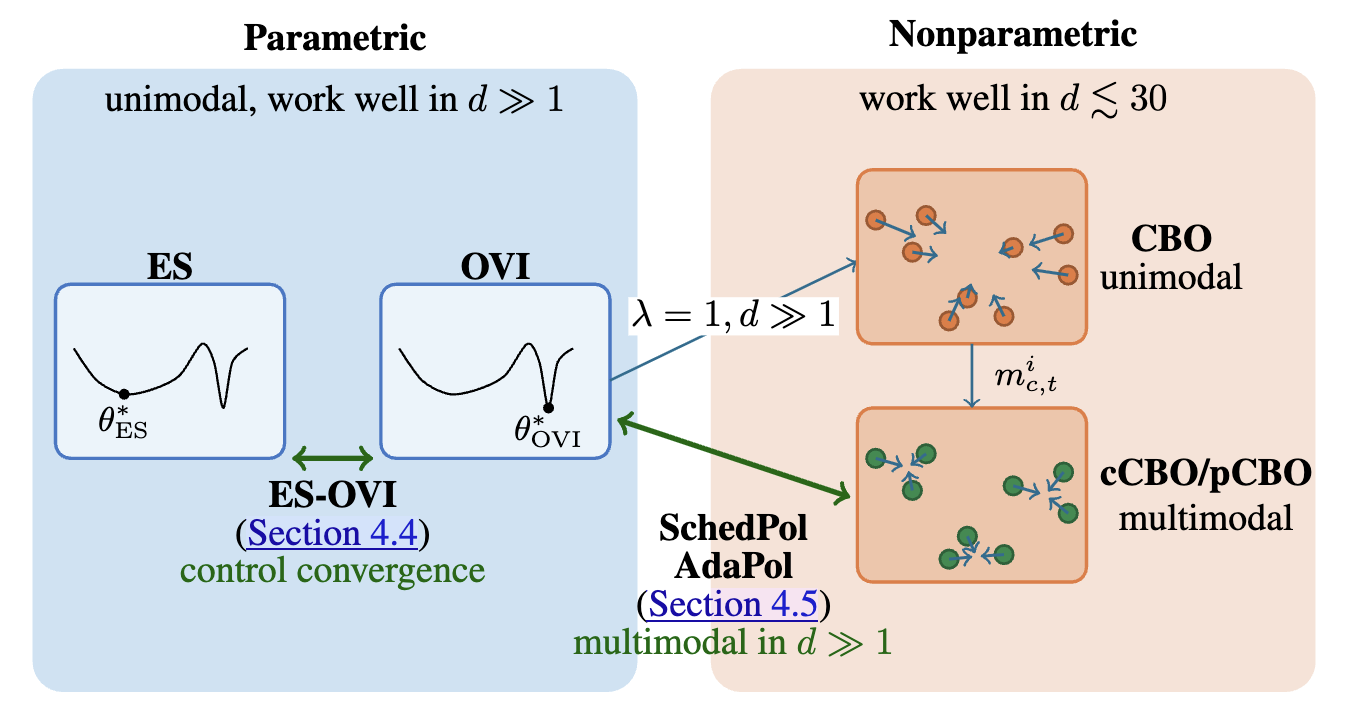

Optimization Dynamics: I also have a side interest in optimization dynamics, which led to an internship paper about Black-Box-Optimizers [ICML2], but also blends into my RL work [ICML1].

- Applications: I am also interested in applications of general ML. I previously worked on applied ML for optical communication [ECOC] and applied diffusion models for high-resolution image generation [Workshop].

I’m always happy to chat about research, so feel free to reach out by e-mail or socials!

news

| Date | News |
|---|---|
| Jun 07, 2026 | Two more workshop papers at ICML 2026! Mitigating Reward Hacking in RLHF via Advantage Sign Robustness and Do Coding Agents Deceive Us? Detecting and Preventing Cheating via Capped Evaluation with Randomized Tests! |
| May 01, 2026 | Two papers accepted at ICML 2026! Gradient Regularization prevents Reward Hacking in RLHF/RLVR and Bridging Spherical Black Box Optimizers! |
| Jul 08, 2025 | Off-Policy Corrected Reward Modeling for RLHF has been accepted at COLM 2025 |

selected publications

- Bridging Spherical Black-Box Optimizers. In ICML 2026, Jul 2026